Homelab

I’ve had numerous homelabs over the years, all of which have been incredibly valuable learning tools. This even includes a 1U Pentium III rackmount server I purchased from eBay in 2002. At that point I’d never been near a “real” server and was super excited, right up to the moment we turned it on for the first time. My student housemates and I spent an age finding a location for the thing where it didn’t make the house shake and we’d still be able to sleep.

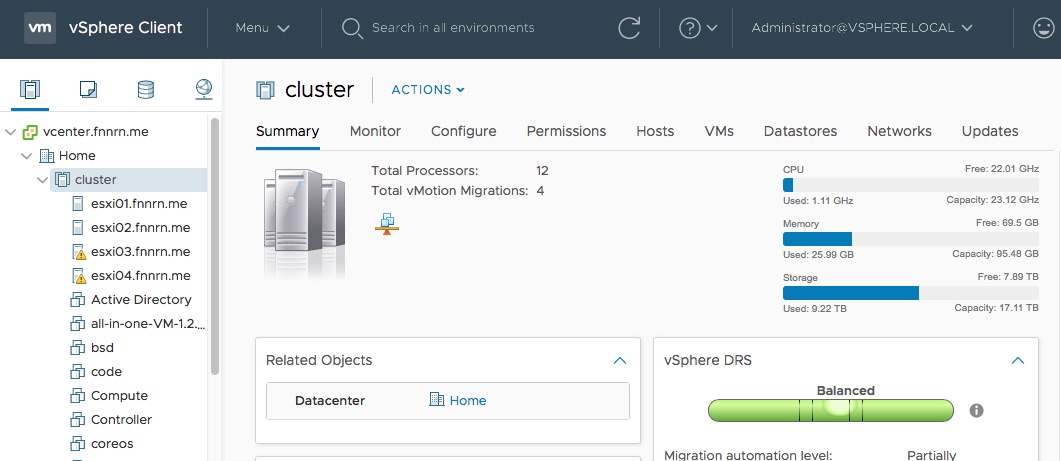

In 2013 I decided I wanted a homelab that would provide a usable vSphere environment (I was working towards VCP/VCAP at the time) and also enough capacity to run a good few virtual machines for other technologies I wanted to learn.

I was in a relatively small flat where Kim was also working so I had a number of restrictions:

- Small footprint

- Minimal (if any) noise

- Relatively “average” power consumption

- Enough capacity and capability to run a good few VMs (~10) in a usable fashion

After doing some calculations and looking at the options on the market (NUCs, Brix, free-standing towers), I decided that a pair of the new ultra-compact PCs should provide the functionality I needed.

Lab v1

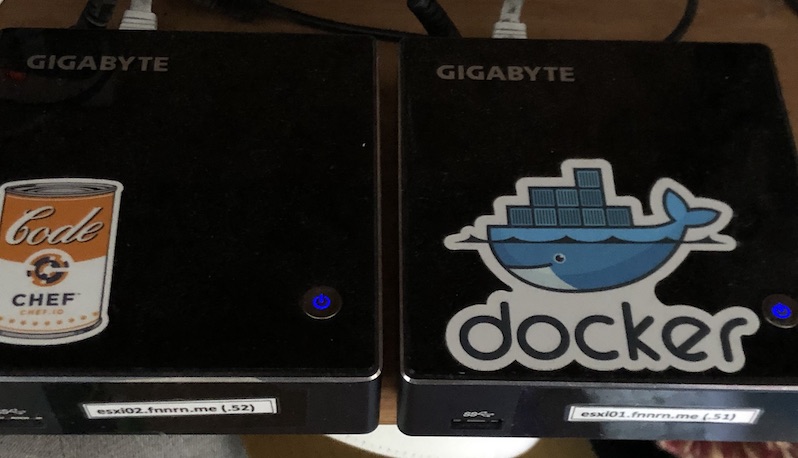

In the end I opted for a pair of Gigabyte Brix GB-XM11-3337 (i5 dual core), which have identical hardware to a comparable Intel NUC but were slightly cheaper at the time.

I also maxed out the RAM and added M.2 modules, giving me the following:

- Dual core Intel(R) Core(TM) i5-3337U CPU @ 1.80GHz (+hyperthreading)

- 16GB DDR3

- 250GB M.2 2240 (half-length)

- vSphere 5.x

Both Brix were also backed by an NFS datastore allowing vMotion between the two physical hosts.
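For reference, attaching the same NFS export to each host can be sketched roughly as below from the ESXi Shell. The server name, export path and datastore label are placeholders made up for this example, not the values used in this lab, and `DRY_RUN` is just a convenience of the sketch (it defaults to printing the commands rather than running them).

```shell
#!/bin/sh
# Sketch only. All three values below are hypothetical placeholders;
# substitute your own NFS server, export path and datastore name.
NFS_HOST="${NFS_HOST:-nas.example.lan}"
NFS_SHARE="${NFS_SHARE:-/export/vmware}"
DATASTORE="${DATASTORE:-nfs-lab}"

# DRY_RUN is a knob invented for this example, not an esxcli feature.
# 1 (default) prints each command; 0 actually executes it.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

mount_nfs_datastore() {
    # Attach the NFS export as a datastore on this host.
    run esxcli storage nfs add --host="$NFS_HOST" --share="$NFS_SHARE" --volume-name="$DATASTORE"
    # List NFS datastores to confirm it is mounted.
    run esxcli storage nfs list
}

mount_nfs_datastore
```

Running the same thing on both hosts with the same datastore name is what lets vMotion treat the storage as shared.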

Over the last five years the two small systems have provided the capability to:

- Deploy enough of the VMware stack to acquire the VCP

- Host various Cisco software, such as the Unified Computing System (UCS) platform, to develop against

- Do the same with the HPE OneView datacentre simulator and Virtual Connect emulator

- Run the Docker EE stack

- Run a highly available Kubernetes stack

- Start endless other incomplete projects :-)

Lab v2

After five years the Brix have only had one issue: a fan died in one of them (the repair was the princely sum of £3.99). However, as of vSphere 6.7 the CPUs are no longer supported, and as I try to automate a lot of quick VM creation/destruction events it has become obvious that they’re starting to struggle with the workload. I was very excited to see that the latest ultra-compact PCs support a theoretical 64GB of RAM; I was less excited to find out that the 32GB SO-DIMMs aren’t for sale.

I briefly looked at some of the SuperMicro E200 / E300 machines that are seemingly popular in the homelab community, but was again concerned about fan noise in the home office. After giving up the wait for 32GB SO-DIMMs, I priced up some of the newest Brix and decided to pull the trigger on a pair of Gigabyte Brix GB-BRi7-8550 (i7 quad core).

With some additions I had the following:

- Quad core Intel(R) Core(TM) i7-8550U CPU @ 1.80GHz (+hyperthreading)

- 32GB DDR4

- 1TB M.2 2280 (full-length)

- vSphere 6.7

Deployment notes

vSphere installation

All nodes in the cluster boot from a USB stick in the rear port, leaving the internal disk available for VM storage and VM flash caching. To ease the installation I typically mount the vSphere installation ISO and the USB stick in VMware Fusion and just step through the installation (including setting the networking configuration for vSphere). This means I don’t need to connect a screen or keyboard to the Brix: I can install and configure everything on my MacBook, then move the USB stick over and boot away.

Network interface cards

vSphere 5.1 originally supported the NIC in the first two Brix; however, the driver was subsequently removed as vSphere was updated. Luckily I’ve always gone through the vSphere upgrade path, which doesn’t remove the existing Realtek r8168 driver. The newer Brix come with an Intel I219-V interface, which is supported out of the box (apparently).

Unfortunately, vSphere will attempt to load the wrong driver for this interface, resulting in a warning in the console UI (DCUI) stating that no network adapters could be found.

To remedy this:

- Enable the ESXi Shell from the DCUI (under Troubleshooting Options)

- Switch to the shell’s TTY (Alt+F1)

- Disable the incorrect driver

  `esxcli system module set --enabled=false --module=ne1000`

- Reboot

After this change, the Brix will make use of the e1000e driver and will have connectivity.
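The steps above can be sketched as a small script for the ESXi Shell. This is only a rough outline of what I type by hand: the `DRY_RUN` switch and the function names are made up for this example (they aren’t part of `esxcli`), and by default the script just prints the commands so nothing is changed or rebooted by accident.

```shell
#!/bin/sh
# Sketch only: intended for the ESXi Shell on the affected host.
# DRY_RUN is a knob invented for this example, not an esxcli feature.
# 1 (default) prints each command; 0 actually executes it.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

fix_i219v() {
    # Show the NICs ESXi currently sees (none are expected before the fix).
    run esxcli network nic list
    # Show the state of the kernel modules, including ne1000.
    run esxcli system module list
    # Disable the driver that is being loaded incorrectly.
    run esxcli system module set --enabled=false --module=ne1000
    # Reboot so the change takes effect.
    run reboot
}

fix_i219v
```

Set `DRY_RUN=0` to apply the change for real; after the reboot, `esxcli network nic list` should show the interface.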

All added together, I now have a four-node cluster (2 x 6.5, 2 x 6.7) that should hopefully provide enough capability to last a good couple of years for my use cases.