Balancing the API Server with nftables

In this second post we will cover the use of nftables in the Linux kernel to provide very simple, low-level load balancing for the Kubernetes API server.

Nftables

Nftables was added to the Linux kernel in the 3.13 release and was presumed to be the de facto replacement for the often complained-about iptables. However, at the moment, with Linux kernels in the 5.x releases, it still looks like iptables has kept its dominance.

Even in some of the larger open source projects the move towards the more modern nftables appears to stall quite easily and become a stuck issue.

Nftables is driven either through the nft CLI tooling, or rules can be created through the netlink interface for a programmatic approach. A few libraries, at various stages of development, are being created to help with the programmatic approach (see the additional resources below).

A few distributions have started the migration to nftables; however, to maintain backwards compatibility, a number of nftables wrappers have been created that allow the distro or an end user to issue iptables commands and have them translated into nftables rules.

Kubernetes API server load balancing with nftables

This is where the architecture gets a little bit different to the load balancers that I covered in my previous post. In the more common load balancers an administrator will create a load balancer endpoint (typically an address and port) for a user to connect to; however, with nftables we will be routing through it like a gateway or firewall.

Routing (simplistic overview)

In networking, routing is the capability of having network traffic “routed” between different logical networks when the destination host isn’t directly addressable from the source host.

Routing Example

- Source Host is given the address 192.168.0.2/24

- Destination Host is given the address 192.168.1.2/24

If we decompose the source address we can see that it’s 192.168.0.2 on a /24 subnet (typically shown as a netmask of 255.255.255.0). The subnet determines how large the directly addressable network is; with this subnet the source host can directly reach any address of the form 192.168.0.{x} (1 - 254).

Based upon the designated subnets, neither host will be able to access the other directly, so we will need to create a router and then update the hosts so that they have routing table entries that specify how to reach the other network.
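To make the subnet arithmetic concrete, here is a small sketch using Python's standard `ipaddress` module with the two addresses from the example. It only illustrates the reasoning above and is not part of the load balancer setup.

```python
import ipaddress

# Addresses taken from the routing example above.
source = ipaddress.ip_interface("192.168.0.2/24")
destination = ipaddress.ip_interface("192.168.1.2/24")

# The network and netmask implied by the /24 prefix.
print(source.network)
print(source.netmask)

# Usable host addresses on the source network (.1 through .254).
hosts = list(source.network.hosts())
print(hosts[0], hosts[-1], len(hosts))

# The destination lies outside the source's subnet, so it cannot be
# reached directly and a router is needed.
print(destination.ip in source.network)
```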

A router needs an address on each network so that the hosts on that network can route their packets through it, so in this example our router will look like the following:

[Diagram: the router, with one interface on each of the two networks]

The router could be a physical routing device or alternatively just a simple server or VM; in this example we’ll presume a simple Linux VM (as we’re focussing on nftables). In order for our Linux VM to forward packets to other networks we need to ensure that IPv4 forwarding (the net.ipv4.ip_forward sysctl) is enabled.
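As a rough sketch of how that setting could be checked on the router VM: on Linux the forwarding flag is exposed as a file under /proc, containing `1` when enabled and `0` when disabled. The helper names below are my own and purely illustrative.

```python
from pathlib import Path

# Standard location of the IPv4 forwarding flag on Linux.
IP_FORWARD = Path("/proc/sys/net/ipv4/ip_forward")


def is_enabled(value: str) -> bool:
    """Interpret the contents of the ip_forward file ("1" means enabled)."""
    return value.strip() == "1"


def forwarding_enabled(path: Path = IP_FORWARD) -> bool:
    """Read the flag from the running kernel (Linux only)."""
    return is_enabled(path.read_text())
```

Calling `forwarding_enabled()` on the router VM should return `True` once forwarding has been switched on. This only reads the value; it does not change it or make it persistent.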

The final step is to ensure that the source host has its routing table updated so that it knows where to send packets destined for the 192.168.1.0/24 network. Typically it will look like the following pseudo code (each OS has a slightly different way of adding routes):

route add 192.168.1.0/24 via 192.168.0.100

This route effectively tells the kernel/networking stack that any packets addressed to the network 192.168.1.0/24 should be forwarded to the address 192.168.0.100, which is our router's interface on the source network. This single additional route means that our source host can now reach the destination address by routing packets via our routing VM.
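The lookup the source host performs can be modelled in a few lines. This is a simplified sketch, not how any particular kernel implements it: the table holds the directly connected network plus the route added above, and the most specific matching prefix wins. `ROUTES` and `lookup` are illustrative names.

```python
import ipaddress

# Routing table of the source host: (network, gateway).
# A gateway of None means the network is directly connected.
ROUTES = [
    (ipaddress.ip_network("192.168.0.0/24"), None),
    (ipaddress.ip_network("192.168.1.0/24"), ipaddress.ip_address("192.168.0.100")),
]


def lookup(routes, destination):
    """Return the gateway for the most specific route matching destination."""
    dest = ipaddress.ip_address(destination)
    matches = [(net, gw) for net, gw in routes if dest in net]
    if not matches:
        raise LookupError(f"no route to {dest}")
    # Longest prefix match: prefer the route with the largest prefix length.
    _, gateway = max(matches, key=lambda route: route[0].prefixlen)
    return gateway


print(lookup(ROUTES, "192.168.1.2"))   # sent via the router
print(lookup(ROUTES, "192.168.0.50"))  # None: delivered directly
```

An address with no matching entry (and no default route in this toy table) raises `LookupError`, which is the model's equivalent of "no route to host".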

I’ll leave the reader to determine a route for destination hosts wanting to connect to hosts in the source network :)

Nftables architectural overview

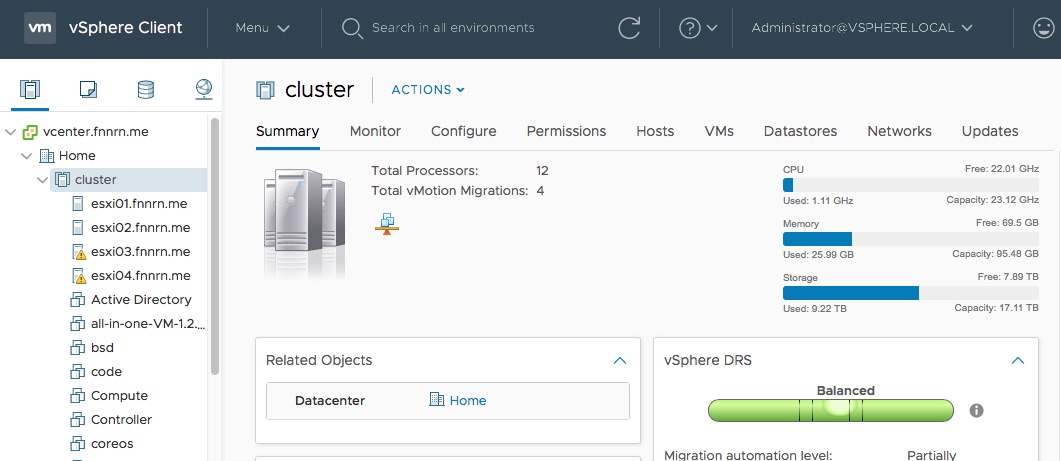

This section details the networks and addresses that I’ll be using, along with a crude diagram showing the layout.

Networks

- 192.168.0.0/24: Home network

- 192.168.1.0/24: VIP (Virtual IP) network

- 192.168.2.0/24: Kubernetes node network

Important Addresses / Hosts

- 192.168.0.1 (Gateway / NAT to internet)

- 192.168.0.11 (Router address in Home network)

- 192.168.2.1 (Router address in Kubernetes network)

- 192.168.1.1 (Virtual IP of the Kubernetes API load balancer)

Kubernetes Nodes

- 192.168.2.110: Master01

- 192.168.2.111: Master02

- 192.168.2.120: Worker01

(worker nodes will follow sequential addressing from Worker01)

Network diagram (including expected routing entries)

[Network diagram: the networks listed above joined by the router / nftables VM, with the expected routing entries]

Using nft to create the load balancer

The load balancing VM will be an Ubuntu 18.04 VM with the nftables package (which provides the nft CLI) and all of its dependencies installed. We will also ensure that IPv4 forwarding (net.ipv4.ip_forward) is enabled and persists through reboots.

Step one: Creation of the nat table

nft create table nat

Step two: Creation of the postrouting and prerouting chains

nft add chain nat postrouting { type nat hook postrouting priority 0 \; }

nft add chain nat prerouting { type nat hook prerouting priority 0 \; }

Note: we will need to add masquerading on the postrouting chain so that return traffic gets back to the source.

nft add rule nat postrouting masquerade

Step three: Creation of the load balancer

nft add rule nat prerouting ip daddr 192.168.1.1 tcp dport 6443 dnat to numgen inc mod 2 map { 0 : 192.168.2.110, 1 : 192.168.2.111 }

This is quite a long command, so to understand it we will deconstruct some key parts:

Listening address

- ip daddr 192.168.1.1: Layer 3 (IP) destination address 192.168.1.1

- tcp dport 6443: Layer 4 (TCP) destination port 6443

Note

The load balancer listening VIP that we are creating isn’t a “tangible” address of the kind that would typically exist with a standard load balancer; there will be no TCP stack (and associated metrics) behind it. The address 192.168.1.1 exists only inside the nftables ruleset, and traffic will only be load balanced when it is routed through the nftables VM.

This is important to be aware of: if we placed a host on the same 192.168.1.0/24 network and tried to access the load balancer address 192.168.1.1, we wouldn’t be able to reach it, as traffic on the same subnet is never passed through a router or gateway. So the only way traffic can hit an nftables load balancer address (VIP) is if that traffic is being routed through the host and examined by our ruleset. Not realising this can lead to a lot of head scratching whilst contemplating what appears to be a simple network configuration.
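The same-subnet trap can be expressed as a one-line check. In this sketch the client addresses (192.168.0.50 and 192.168.1.50) are hypothetical and chosen only for illustration; the function simply asks whether the VIP falls outside the client's own subnet, which is the condition for the packet being handed to a router at all.

```python
import ipaddress

# The load balancer VIP from this post.
VIP = ipaddress.ip_address("192.168.1.1")


def leaves_subnet(client_interface, target=VIP):
    """True when target is outside the client's subnet, so the packet goes to a router."""
    iface = ipaddress.ip_interface(client_interface)
    return target not in iface.network


# Hypothetical client on the Home network: traffic is routed, so it can
# pass through the nftables VM (given a suitable route).
print(leaves_subnet("192.168.0.50/24"))

# Hypothetical client inside the VIP network: the VIP looks on-link, so
# the packet is never sent to the router and never sees the ruleset.
print(leaves_subnet("192.168.1.50/24"))
```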

Destination of traffic

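- to numgen inc mod 2: use an incrementing number generator taken modulo 2, so the value alternates between 0 and 1 (round robin); numgen random mod 2 would pick at random instead

- map { 0 : 192.168.2.110, 1 : 192.168.2.111 }: our pool of backend servers that the generated number selects from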
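To show what that selection amounts to, here is a toy model of an incrementing counter taken modulo 2 and fed into the map. It is only a sketch of the behaviour, not of the kernel implementation, and the starting value of 0 is an assumption of the model.

```python
import itertools

# Backend pool, keyed exactly as in the nft map.
BACKENDS = {0: "192.168.2.110", 1: "192.168.2.111"}


def numgen_inc(mod):
    """Yield 0, 1, ..., mod - 1, 0, 1, ... like an incrementing counter modulo mod."""
    for n in itertools.count():
        yield n % mod


gen = numgen_inc(2)
# Each new connection asks the generator for the next key.
picks = [BACKENDS[next(gen)] for _ in range(4)]
print(picks)
```

Successive new connections alternate between the two control plane nodes, which is plain round robin.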

Step four: Validate nftables rules

nft list tables

This command will list all of the tables that have been created; at this point we should see our nat table.

nft list table ip nat

This command will list all of the rules/chains that have been created as part of the nat table; at this point we should see our routing chains and our rule watching for incoming traffic on our VIP, along with the destination hosts.

Step five: Add our routes

Client route

The client here will be my home workstation, and will need a route adding so that it can access the load balancer VIP 192.168.1.1 on the 192.168.1.0/24 network:

Pseudo code

route add 192.168.1.0/24 via 192.168.0.11

Kubernetes API server routes

These are needed for a number of reasons; the main reason here, though, is that kubeadm will need to access the load balancer VIP 192.168.1.1 to ensure that the control plane is accessible.

Pseudo code

route add 192.168.1.0/24 via 192.168.2.1

Using Kubeadm to create our HA (load balanced) Kubernetes control plane

At this point we should have the following:

- Load balancer VIP/port created in nftables

- Pool of servers defined under this VIP

- Client route set through the nftables VM

- Kubernetes API server set to route to the VIP network through the nftables VM

In order to create an HA control plane with kubeadm we will need to create a small YAML file with the load balancer configuration detailed. Below is an example configuration for Kubernetes 1.15 with our above load balancer configuration:

apiVersion: kubeadm.k8s.io/v1beta1

Once this YAML file has been created on the file system of the first control plane node we can bootstrap the Kubernetes cluster:

kubeadm init --config=/path/to/config.yaml --experimental-upload-certs

The kubeadm utility will run through a large battery of pre-bootstrap tests and checks and will start to deploy the Kubernetes control plane components. Once they’re deployed and starting up, the kubeadm utility will attempt to verify the health of the control plane through the load balancer address. If everything has been deployed correctly, then once the control plane components are healthy traffic should flow through the load balancer to the bootstrapping node.

Load Balancing applications

All of the above notes cover using nftables to load balance the Kubernetes API server; however, the above example can easily be used to load balance an application that is being run within the Kubernetes cluster. This quick example will use the Kubernetes “Hello World” example (https://kubernetes.io/docs/tasks/access-application-cluster/service-access-application-cluster/) (which I’ve just discovered is 644MB ¯\_(ツ)_/¯) and will be load balanced in a subnet that I’ll define as my application network (192.168.4.0/24).

Step one: Create “Hello World” deployment

kubectl run hello-world --replicas=2 --labels="run=load-balancer-example" --image=gcr.io/google-samples/node-hello:1.0 --port=8080

This will pull the needed images and start two replicas on the cluster

Step two: Expose the application through a service and NodePort

kubectl expose deployment hello-world --type=NodePort --name=example-service

Step three: Find the exposed NodePort

kubectl describe services example-service | grep NodePort

This command will show the port that Kubernetes has selected to expose our service on. We can now check that the application is working by making sure the Pods are running with kubectl get deployments hello-world and then testing connectivity with curl http://node-IP:NodePort.

Step four: Load Balance the service

Our two workers (192.168.2.120/121) are exposing the service through their NodePort 31243. We will use our load balancer to create a VIP of 192.168.4.1 exposing the same port 31243, which will load balance over both workers.

nft add rule nat prerouting ip daddr 192.168.4.1 \

Note: Connection state tracking is managed by conntrack and is applied on the first packet in a flow. (thanks @naadirjeewa)
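A toy model may help show why that matters: the backend is chosen once, when the first packet of a flow arrives, and every later packet of the same flow reuses the recorded choice. The class below is a deliberately simplified sketch (a dictionary standing in for the conntrack table, with no timeouts or return-path handling), and the client address 192.168.0.50 is hypothetical.

```python
from itertools import count


class NatModel:
    """Simplified model of round-robin DNAT with per-flow state."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.counter = count()  # stands in for the incrementing number generator
        self.flows = {}         # stands in for the conntrack table

    def handle_packet(self, src_ip, src_port, dst_ip, dst_port):
        flow = (src_ip, src_port, dst_ip, dst_port)
        if flow not in self.flows:
            # First packet of a new flow: pick the next backend and remember it.
            index = next(self.counter) % len(self.backends)
            self.flows[flow] = self.backends[index]
        # Later packets of the same flow reuse the recorded backend.
        return self.flows[flow]


nat = NatModel(["192.168.2.120", "192.168.2.121"])
first = nat.handle_packet("192.168.0.50", 40000, "192.168.4.1", 31243)
again = nat.handle_packet("192.168.0.50", 40000, "192.168.4.1", 31243)
other = nat.handle_packet("192.168.0.50", 40001, "192.168.4.1", 31243)
print(first, again, other)
```

In this model a single connection never flips between workers mid-stream, while a second connection (a different source port here) lands on the other worker.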

Step five: Add route on client machine to access the load balanced service

My workstation is on the Home network as defined above and will need a route adding so that traffic will go through the load balancer to access the VIP. On my Mac the command is:

sudo route -n add 192.168.4.0/24 192.168.0.11

Step six: Confirm that the service is now available

From the client machine we can now validate that our new load balancer address is both accessible and is load balancing over our application on the Kubernetes cluster.

$ curl http://192.168.4.1:31243/

Monitoring and Metrics with nftables

The nft CLI tool provides capabilities for debugging and monitoring the various rules that have been created within nftables. However, in order to produce any level of metrics, counters (https://wiki.nftables.org/wiki-nftables/index.php/Counters) need to be defined on a particular rule. We will re-create our Kubernetes API server load balancer with a counter enabled:

nft add rule nat prerouting ip daddr 192.168.1.1 tcp dport 6443 \

If we look at the above rule we can see that on the second line we’ve added a counter statement that is placed after the VIP definition and before the destination dnat to the endpoints.

If we now examine the nat ruleset we can see that counter values are being incremented as the load balancer VIP is being accessed.

nft list table ip nat | grep counter

This output is parsable, although not all that useful. The nftables documentation mentions SNMP, but I’ve yet to find any real concrete documentation.
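As a minimal sketch of what "parsable" could mean in practice, the snippet below pulls the packet and byte totals out of the listing with a regular expression. The sample text and its numbers are made up for illustration and are not captured output; `parse_counters` and `read_nat_table` are illustrative names, and the latter assumes nft is installed and the script runs with sufficient privileges.

```python
import re
import subprocess

# nft prints counters in the form "counter packets N bytes M".
COUNTER_RE = re.compile(r"counter packets (\d+) bytes (\d+)")


def parse_counters(ruleset_text):
    """Return a list of (packets, bytes) tuples, one per counter found."""
    return [(int(p), int(b)) for p, b in COUNTER_RE.findall(ruleset_text)]


def read_nat_table():
    """Fetch the nat table listing from nft (needs root; not called here)."""
    result = subprocess.run(
        ["nft", "list", "table", "ip", "nat"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


# Illustrative sample only; the counter values are invented.
SAMPLE = """\
table ip nat {
    chain prerouting {
        type nat hook prerouting priority 0; policy accept;
        ip daddr 192.168.1.1 tcp dport 6443 counter packets 12 bytes 720 dnat to numgen inc mod 2 map { 0 : 192.168.2.110, 1 : 192.168.2.111 }
    }
}
"""

print(parse_counters(SAMPLE))
```

On the load balancer VM, `parse_counters(read_nat_table())` would return the live values, which could then be handed to whatever metrics system is in use.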

Additional resources

- https://wiki.nftables.org/wiki-nftables/index.php/Main_Page (NFT Wiki)

- https://github.com/google/nftables (Google's nft Go package (not currently supported by Google))

- https://github.com/sbezverk/nftableslib (Go Package enhancing the Google NFT package)

- https://github.com/zevenet/nftlb (C library and API for managing nftables load balancers)